Cards

Add to or configure your own rig. Passive, active and liquid cooling options.

Starting at $999

Watch TT-Deploy to learn more about Tenstorrent Galaxy™ Blackhole deployed at scale, with industry-leading benchmarks.

Whisper-quiet and liquid-cooled: run models of up to 120B parameters from your desk.

Starting at $9,999

Sovereign, scale-out servers for production AI.

Starting at $70,000

Accelerate your business with in-use, performant, and flexible IP without vendor lock-in.

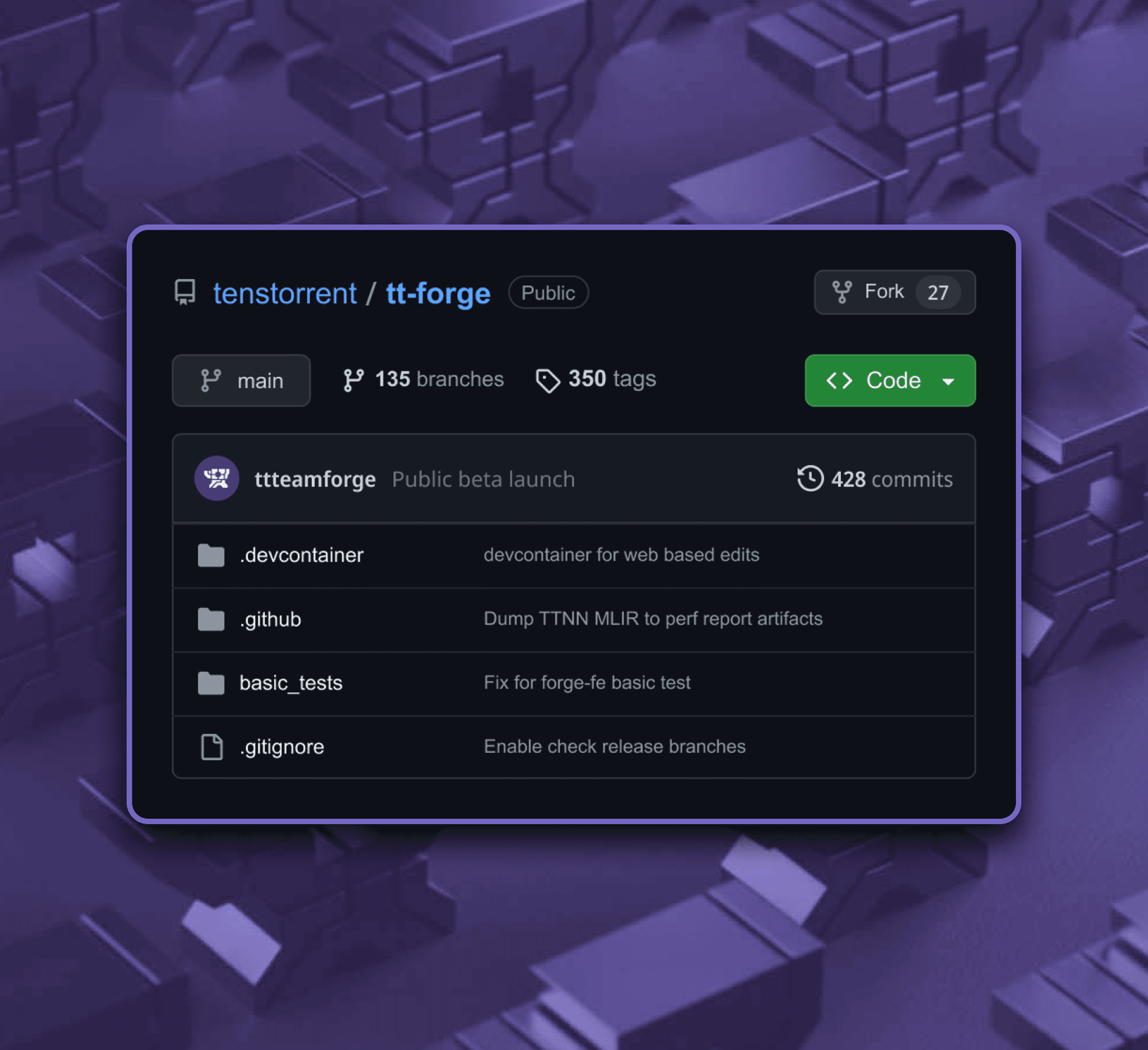

TT-Forge™ is Tenstorrent’s MLIR-based open-source compiler, built on top of our existing AI software stack, and designed to work with PyTorch, JAX, ONNX, and more. TT-Forge™ is now in public beta, and ready for feedback. File a PR or join the conversation on Discord.

AI is changing the laws that once governed computing. Our IP is transparent, our architectures are open, and our software is open source, so you can edit, select, fork, and own your silicon future.

Get the latest info, ask questions, and review our open-source repos.

Build an open future with us. Fix bugs, add features, get paid.