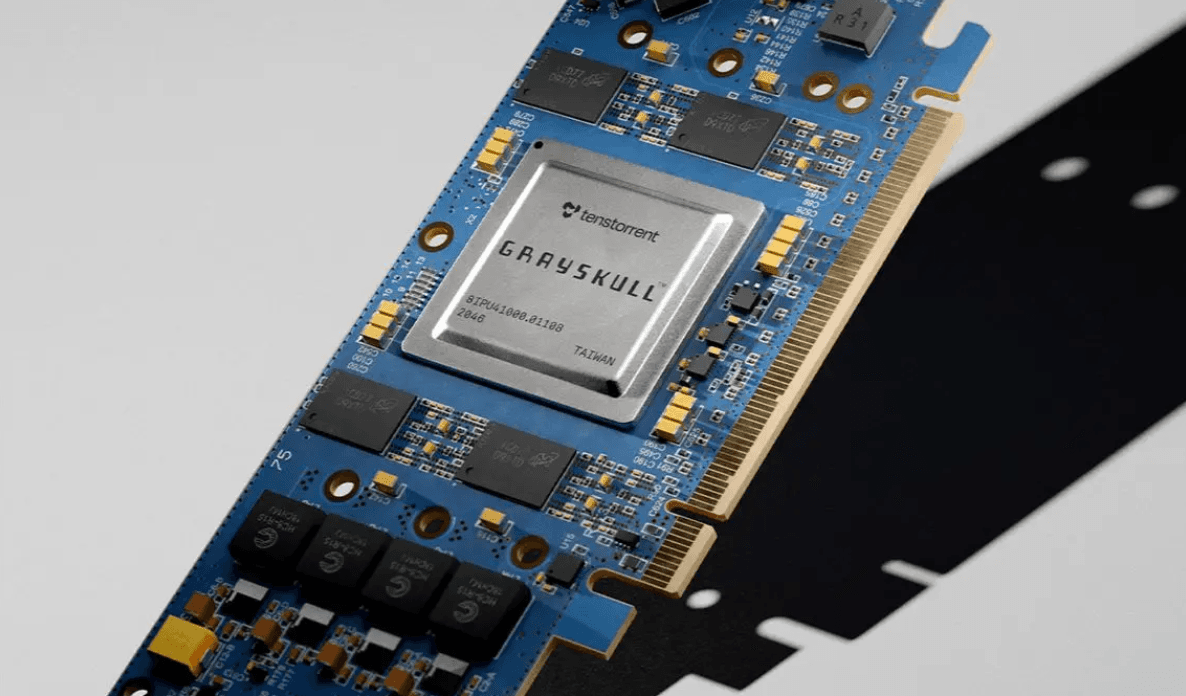

Attention in SRAM on Tenstorrent Grayskull

The Tenstorrent Grayskull architecture provides a large SRAM distributed across a grid of cores. This work presents a fused kernel for Grayskull that uses only this SRAM and combines the matrix multiplication, attention score scaling, and Softmax operations. It also presents a dedicated Softmax kernel that uses the SRAM, together with a CPU implementation that serves as a baseline. On Grayskull, the Softmax operation consumes most of the runtime when computing attention weights from queries and keys. The dedicated Softmax kernel is up to 10× faster than the CPU implementation, and the Softmax implementation inside the fused kernel is approximately 1.8× faster than the dedicated Softmax kernel. The time and memory complexity of all implementations are quadratic in sequence length. Currently, the Grayskull e150 is approximately 30× cheaper for the general public than an Nvidia H100 PCIe (a state-of-the-art GPU) and offers approximately 1.5× more SRAM.
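To make the three combined steps concrete, here is a minimal pure-Python sketch of computing attention weights from queries and keys: a matrix multiplication, scaling of the attention scores, and a row-wise Softmax. It is only an illustration, not the paper's Grayskull kernel or its CPU baseline. The function name `attention_weights`, the 1/√d scaling factor (the usual scaled dot-product convention), and the max-subtraction for numerical stability are assumptions not stated in the abstract.

```python
import math


def attention_weights(queries, keys):
    """Hypothetical reference: softmax(Q K^T / sqrt(d)), computed row by row.

    queries: list of n vectors of length d
    keys:    list of n vectors of length d
    Returns an n x n list of lists, so memory grows quadratically with n.
    """
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)  # assumed scaling convention
    weights = []
    for q in queries:
        # Matrix multiplication and scaling: one row of Q K^T times 1/sqrt(d).
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        # Softmax over the row; subtracting the max avoids overflow in exp.
        row_max = max(scores)
        exps = [math.exp(s - row_max) for s in scores]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights


if __name__ == "__main__":
    q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    for row in attention_weights(q, k):
        print(row)
```

Each of the n query rows produces n weights, so the output has n² entries, which matches the quadratic time and memory complexity noted above. In this sketch the three steps share a single loop over rows, which loosely mirrors the idea of fusing them rather than running them as separate passes.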

Read the full paper here and find the associated GitHub repository here.

Originally posted on arXiv