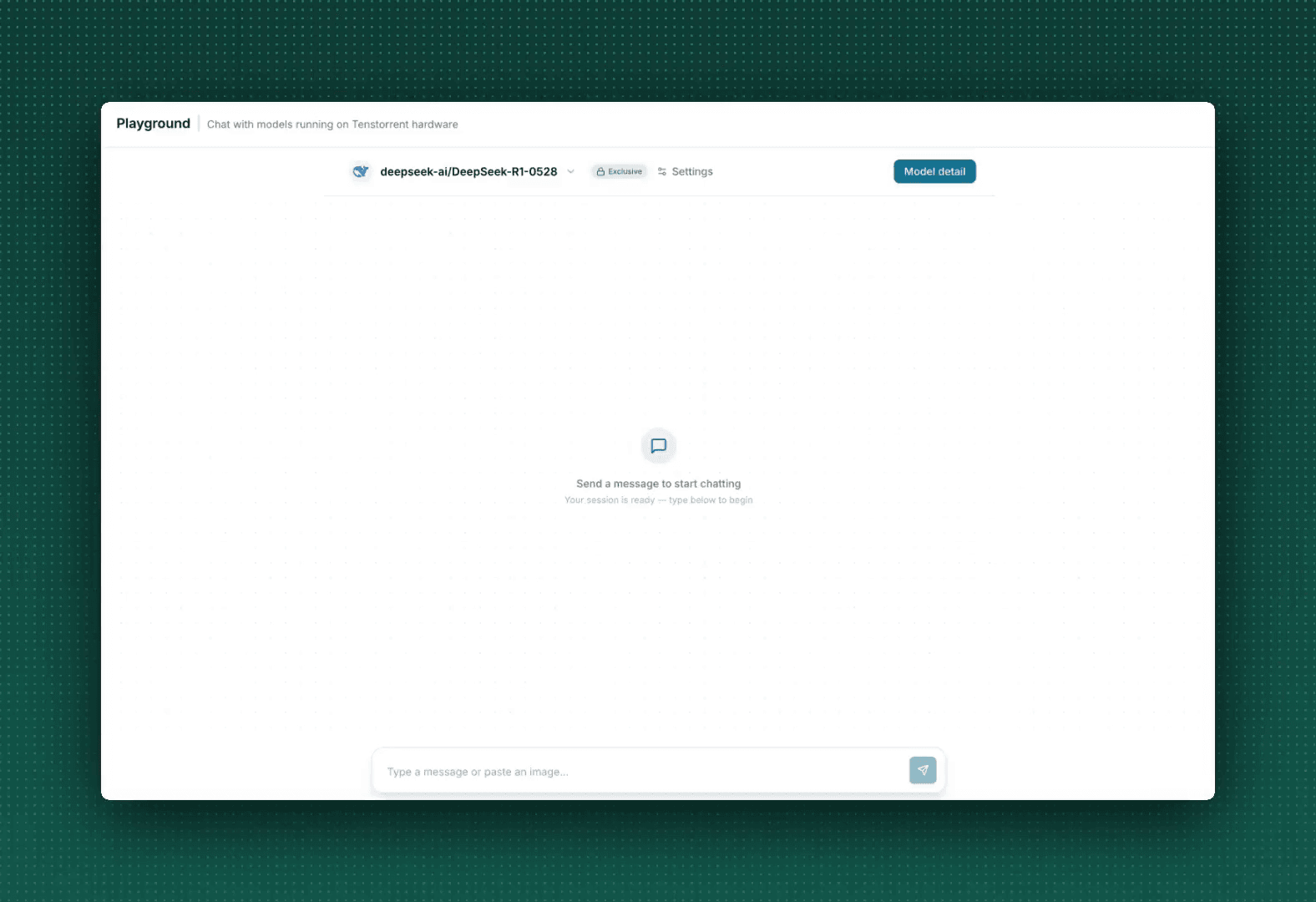

Fastest Large-Context LLM Inference

Tenstorrent Galaxy is optimized for premium, latency-sensitive AI workloads. Run superclusters for high-margin AI use cases, including agentic workflows, real-time systems, and long-context reasoning. Use the same general-purpose Tenstorrent AI systems for both prefill and decode.

Half the Time to First Token, 4x Output Speed

Half the Time to First Token

In Blitz mode, which is optimized for speed, a Tenstorrent Galaxy supercluster parallelizes prefill across servers and overlaps data placement with data flow to keep compute utilization high.

Chart: Time to First Token (sec), DeepSeek-R1-0528, 100k context, GPUs vs. Tenstorrent

Source: Artificial Analysis. The GPU figure is the Nvidia top-5 average, taken over the top 5 providers including Eigen AI, DeepInfra, Fireworks, Novita AI, and Nebius.
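To see why overlapping data movement with compute shortens prefill, a generic two-stage pipeline timing model can help. This is only a sketch under stated assumptions: it is not a description of Tenstorrent's scheduler, the function names are hypothetical, and the chunk count and per-chunk times are illustrative placeholders rather than measurements.

```python
def serial_time(chunks: int, move_s: float, compute_s: float) -> float:
    """Each chunk is moved into place, then computed, with no overlap."""
    return chunks * (move_s + compute_s)


def overlapped_time(chunks: int, move_s: float, compute_s: float) -> float:
    """Two-stage pipeline: chunk i+1 is moved while chunk i is computed.

    Only the first move and the last compute are exposed; every step in
    between costs whichever stage is slower.
    """
    if chunks == 0:
        return 0.0
    return move_s + (chunks - 1) * max(move_s, compute_s) + compute_s


def compute_utilization(chunks: int, compute_s: float, total_s: float) -> float:
    """Fraction of wall-clock time spent doing useful compute."""
    return chunks * compute_s / total_s


# Illustrative placeholder values (assumptions, not measured data).
chunks, move_s, compute_s = 8, 1.0, 3.0

t_serial = serial_time(chunks, move_s, compute_s)
t_overlap = overlapped_time(chunks, move_s, compute_s)
u_serial = compute_utilization(chunks, compute_s, t_serial)
u_overlap = compute_utilization(chunks, compute_s, t_overlap)

print(f"serial:     {t_serial:.1f} s, utilization {u_serial:.2f}")
print(f"overlapped: {t_overlap:.1f} s, utilization {u_overlap:.2f}")
```

In this model the benefit grows as movement and compute times become comparable, because more of the movement cost is hidden behind compute.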

4x Output Speed

Decode on Tenstorrent Galaxy superclusters uses on-chip SRAM and DRAM, pipelined across servers, enabling scale-out to large models with the longest contexts for agentic workloads.

Chart: Output Speed (tokens/sec), DeepSeek-R1-0528, 100k context, GPUs vs. Tenstorrent

Source: Artificial Analysis. The GPU figure is the Nvidia top-5 average, taken over the top 5 providers including Eigen AI, DeepInfra, Fireworks, Novita AI, and Nebius.
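The two headline ratios combine into end-to-end response time roughly as time to first token plus output tokens divided by output speed. The sketch below works through that arithmetic. The baseline values and the response length are hypothetical placeholders chosen to make the numbers concrete, not benchmark results; only the "half" and "4x" ratios come from the text above.

```python
def response_time(ttft_s: float, output_tokens: int, tokens_per_s: float) -> float:
    """Approximate end-to-end time for one streamed response.

    Wait for the first token, then decode the remaining output at a
    steady rate (the first token is counted in both terms for simplicity).
    """
    return ttft_s + output_tokens / tokens_per_s


# Hypothetical baseline values (assumptions, not measurements).
baseline_ttft_s = 20.0
baseline_tokens_per_s = 25.0
output_tokens = 1000

baseline = response_time(baseline_ttft_s, output_tokens, baseline_tokens_per_s)

# Apply the headline ratios: half the time to first token, 4x the output speed.
improved = response_time(baseline_ttft_s / 2, output_tokens, baseline_tokens_per_s * 4)

speedup = baseline / improved
print(f"baseline: {baseline:.1f} s, improved: {improved:.1f} s, speedup: {speedup:.1f}x")
```

The overall speedup depends on response length: short responses are dominated by time to first token and approach 2x, while long responses are dominated by decode and approach 4x.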

Benefits

Fast

Effective parallelization across a large number of chips enables us to deliver the fastest large-context LLMs.

Networked AI

Utilize the same hardware for prefill and decode. Our Networked AI architecture unifies compute, SRAM and DRAM memory, and networking for general-purpose AI.

Scalable

Built to scale with supercluster configurations. GPU architectures are constrained by the box — Tenstorrent Galaxy scales past it.

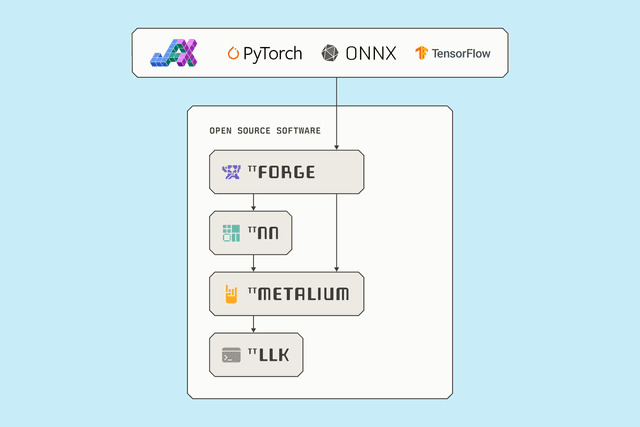

Open

No proprietary interconnects, switches, or HBM. Fully open-source end-to-end software stack. Deploy state-of-the-art models for your AI solutions.

Technologies

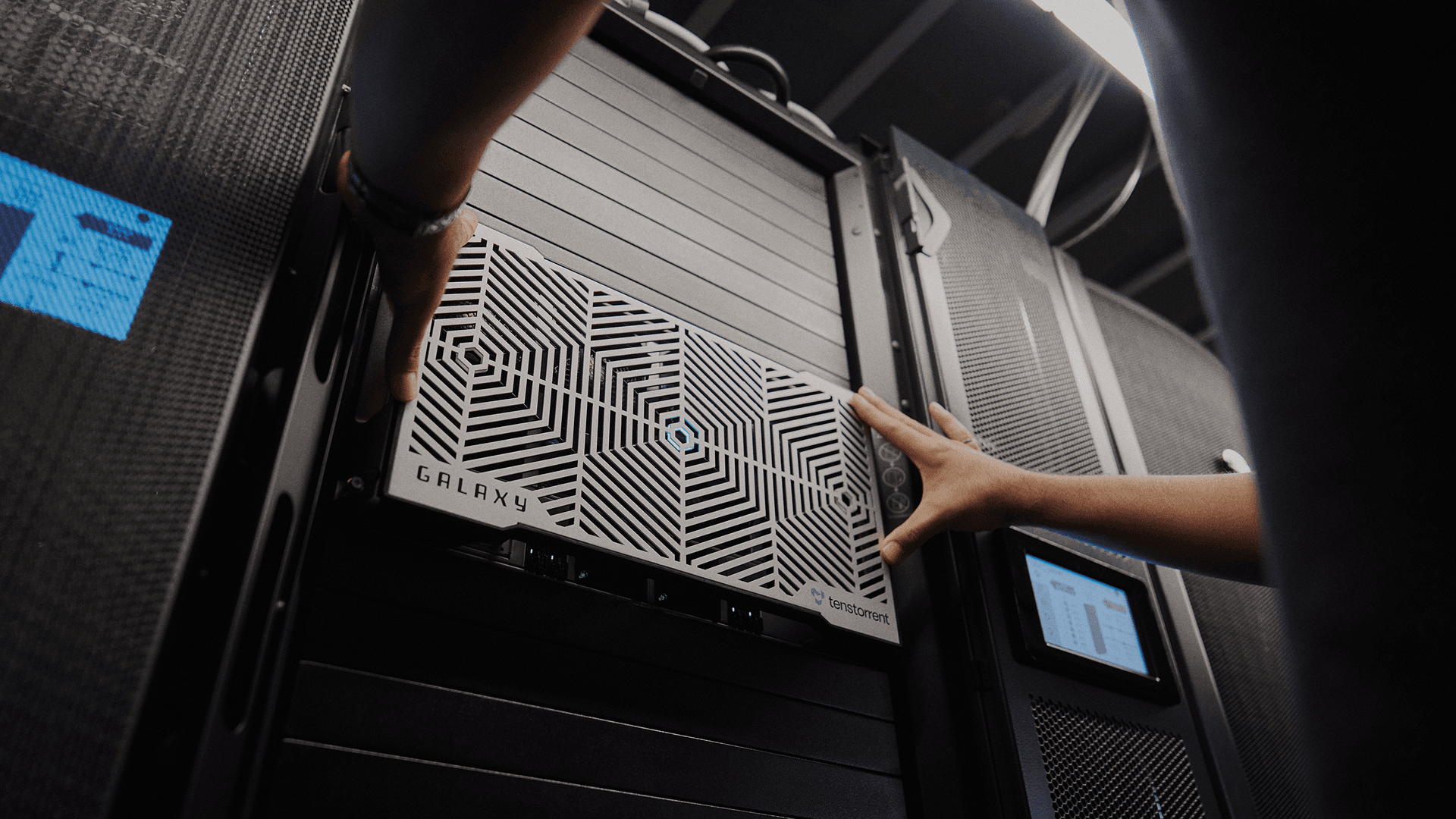

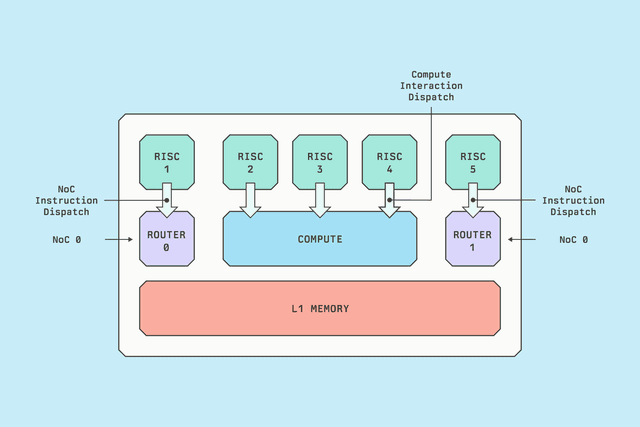

Blackhole Architecture for Production LLM Inference

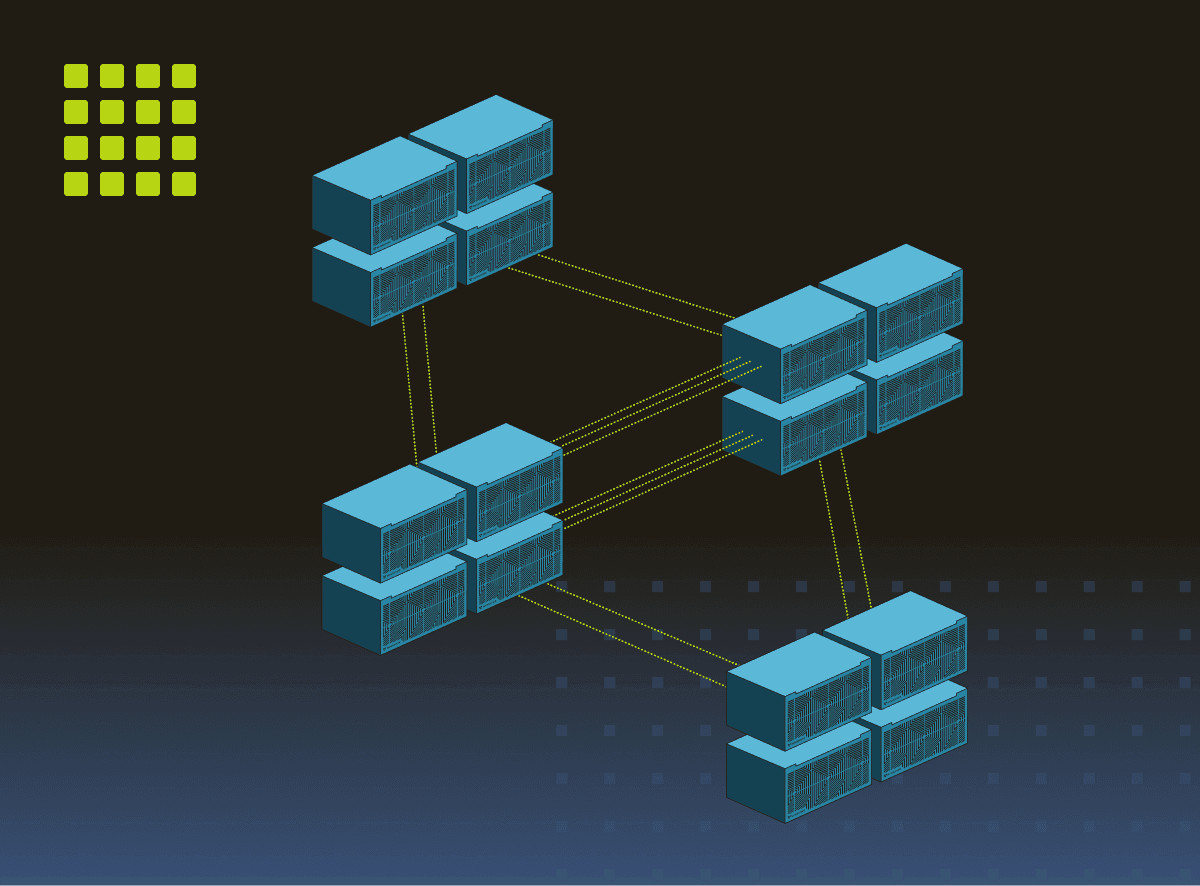

Tenstorrent Galaxy superclusters

Run anything – fast, affordable, simple. High-density, scalable compute. Add systems, add speed.

Tensix Cores

Purpose-built for parallel, continuous workloads. With 91x the SRAM capacity per dollar and 12x the SRAM bandwidth per dollar, Tensix delivers where it counts.

Model Support

90% of models from Hugging Face just work, and coverage is growing every day across LLMs, Image Gen, Speech, Vision, Embeddings, Encoders, and more.

4 x Tenstorrent Galaxy™ Blackhole superclusters

Tenstorrent Galaxy™ Blackhole can be deployed in superclusters, extending into multi-server topologies that can scale out to any size. Four Tenstorrent Galaxy™ superclusters lead the industry in performance and cost for large-context LLM inference.