Tenstorrent Galaxy™ Blackhole

Starting at $110,000

Run anything with our scalable, ultra-dense AI server.

Tenstorrent Galaxy™ runs any workload, for both training and inference. It leads the industry in video generation, decode, and prefill benchmarks, and TT-Forge, Tenstorrent's open-source MLIR-based compiler, supports more models than any competitor. Video, language, code: pick your model and run it fast.

Contact us for custom configurations and pricing

Starting at $440,000

A supercluster of four Tenstorrent Galaxy Blackhole systems that scales up for fast and affordable AI solutions.

Starting at $70,000

Our Tenstorrent Galaxy server built on our previous-generation chip technology, Wormhole. Still scalable, ultra-dense, and performant.

Tenstorrent Galaxy Blackhole also comes as a supercluster of four systems, which extends into larger multi-server clusters. Configurations of 4 to 36 or more Tenstorrent Galaxy systems are optimized for workloads including AI video generation, large-scale LLM inference, and private AI infrastructure.
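As a rough sizing aid, the sketch below multiplies a few per-system figures from the spec list on this page by the number of systems. It assumes those figures add linearly and ignores switches, cabling, and any interconnect overhead, so treat the results as back-of-envelope arithmetic rather than measured cluster performance. The names `PER_SYSTEM` and `cluster_totals` are illustrative only.

```python
# Back-of-envelope totals for multi-system Galaxy Blackhole clusters.
# ASSUMPTION: per-system figures add linearly; networking gear and
# interconnect overheads are ignored.

PER_SYSTEM = {
    "Blackhole ASICs": 32,
    "Block FP8 PFLOPS": 23,
    "GDDR6 TB": 1,
    "Max power kW": 12,  # nominal max; configurable up to 14.5 kW
}


def cluster_totals(systems: int) -> dict[str, int]:
    """Scale each per-system figure by the number of Galaxy systems."""
    return {name: systems * value for name, value in PER_SYSTEM.items()}


# The smallest and largest configurations named in the text.
for n in (4, 36):
    print(n, cluster_totals(n))
```

Under these assumptions a four-system supercluster totals 128 Blackhole ASICs, 92 PFLOPS Block FP8, 4 TB of GDDR6, and 48 kW of maximum power; the $440,000 supercluster starting price likewise equals four times the $110,000 single-system price.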

10× Faster Real-Time High-Quality Video

Run state-of-the-art video models and generate high-quality video faster on Tenstorrent Galaxy superclusters. Generate a 720p, 81-frame video in 2.4 seconds.
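The quoted figure can be turned into a throughput number. The frame count and generation time come from the text; the 16 fps playback rate is an assumption, since this page does not state the output frame rate.

```python
# Throughput arithmetic for the quoted video-generation figure.
FRAMES = 81          # from the text
GEN_SECONDS = 2.4    # from the text
PLAYBACK_FPS = 16    # ASSUMPTION: output frame rate is not stated on this page

gen_fps = FRAMES / GEN_SECONDS                # frames generated per second
clip_seconds = FRAMES / PLAYBACK_FPS          # length of the resulting clip
realtime_factor = clip_seconds / GEN_SECONDS  # > 1 means faster than real time

print(f"{gen_fps:.2f} frames/s generated")
print(f"{clip_seconds:.2f} s clip at {PLAYBACK_FPS} fps")
print(f"{realtime_factor:.2f}x real time")
```

At an assumed 16 fps the 81 frames make a clip of about 5.1 s, generated in 2.4 s; even at 24 fps the clip (about 3.4 s) still outlasts the generation time.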

Fastest Large-Context LLM Inference

Tenstorrent Galaxy is optimized for premium, latency-sensitive AI workloads. Run superclusters for high-margin AI use cases, including agentic workflows, real-time systems, and long-context reasoning. Use the same general-purpose Tenstorrent AI systems for both prefill and decode.

Accelerator Compute, Memory, and Connectivity

Accelerators

32× Blackhole® ASICs

Performance

23 PFLOPS Block FP8

Accelerator SRAM

6.2 GB @ 2.9 PB/s

Accelerator DRAM

1 TB GDDR6 @ 16 TB/s

Accelerator Fabric

10× 400 GbE links per ASIC for 32 TB/s

Cluster Scale-out

Up to 56× 800 GbE QSFP‑DD ports for 11.2 TB/s
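The fabric and scale-out totals can be reproduced with simple arithmetic. The sketch below assumes, since this page does not say so, that each quoted TB/s figure counts both directions of every full-duplex Ethernet link (an interpretation that matches both published numbers) and that "1 TB" of GDDR6 means 1024 GB. It also divides the system totals by 32 for an approximate per-ASIC view; the totals are rounded, so the per-ASIC values are too. The helper `aggregate_tb_per_s` is hypothetical.

```python
# Reproduce the quoted link-bandwidth totals and derive rough per-ASIC figures.
# ASSUMPTIONS: TB/s totals count both directions of each full-duplex link;
# "1 TB" of GDDR6 is taken as 1024 GB. Bandwidth units are decimal.

ASICS = 32
BITS_PER_BYTE = 8


def aggregate_tb_per_s(links: int, gbit_per_link: int, both_directions: bool = True) -> float:
    """Aggregate bandwidth in TB/s for `links` Ethernet links of `gbit_per_link` Gb/s each."""
    tbit_one_way = links * gbit_per_link / 1000   # Tb/s in a single direction
    tbyte_one_way = tbit_one_way / BITS_PER_BYTE  # bits -> bytes
    return tbyte_one_way * (2 if both_directions else 1)


fabric = aggregate_tb_per_s(links=ASICS * 10, gbit_per_link=400)  # 10x 400 GbE per ASIC
scale_out = aggregate_tb_per_s(links=56, gbit_per_link=800)       # 56x 800 GbE ports

print(f"Accelerator fabric: {fabric:.1f} TB/s")
print(f"Cluster scale-out:  {scale_out:.1f} TB/s")

# Approximate per-ASIC share of the (rounded) system totals.
system_totals = {
    "Block FP8 PFLOPS": 23,
    "SRAM GB": 6.2,
    "SRAM PB/s": 2.9,
    "GDDR6 GB": 1024,
    "GDDR6 TB/s": 16,
}
for name, total in system_totals.items():
    print(f"{name}: {total / ASICS:.3g} per ASIC")
```

Under that reading, each ASIC's ten 400 GbE links carry 500 GB/s in each direction, and the system figures double the one-way totals (16 TB/s for the fabric, 5.6 TB/s for scale-out).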

Host Compute, Memory, and Connectivity

Host CPU

1× AMD EPYC 9004 (Zen 4), up to 32 cores, ≤280 W TDP

Host Memory

Up to 576 GB (6× 96 GB) DDR5-4800 ECC RDIMM (6 slots, 0 free)

Networking

1× OCP NIC 3.0 PCIe Gen5 x16 SFF (2× 200 GbE default configuration)

Management Network

1× Dedicated RJ45 1 GbE with baseboard management controller (BMC)

Storage OS

2× 960 GB M.2 2280 PCIe Gen4 x4 NVMe SSD

Storage Internal

Up to 4× E1.S PCIe Gen5 x4 NVMe SSD (9.5/15 mm)

Software

Ubuntu 22.04

Deployment & Operations

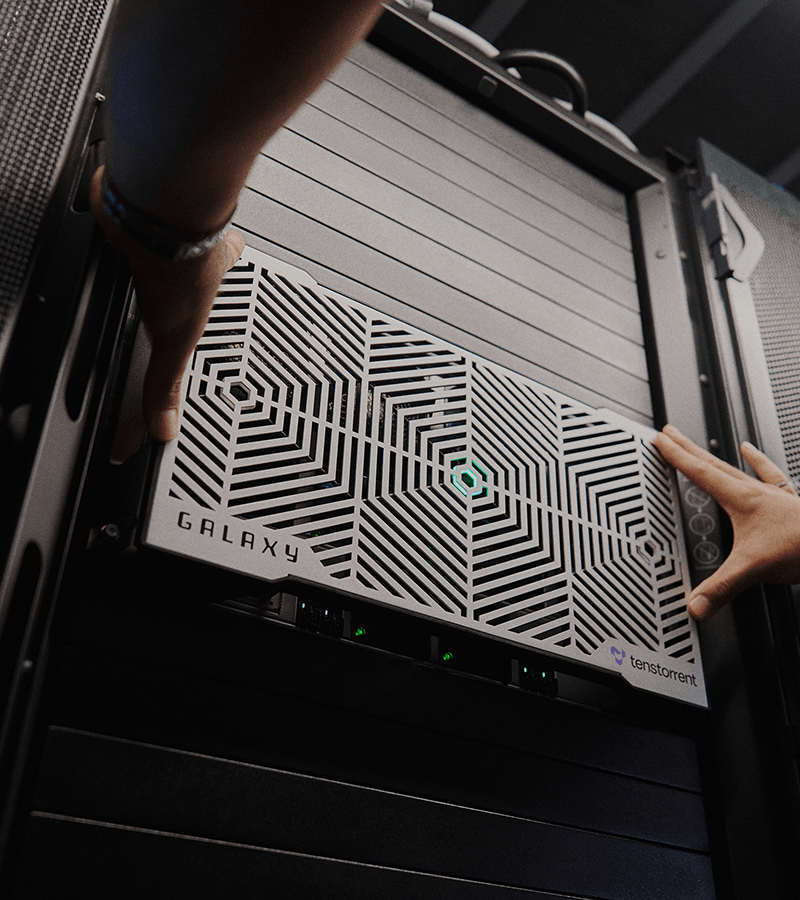

Form Factor

6U rackmount, air‑cooled chassis

System Dimensions

Height: 10.4 in (263.4 mm), Width: 17.6 in (446.8 mm), Length: 34.8 in (884.5 mm)

System Weight

262 lbs (119 kg)

System Power Usage

8–10 kW average, 12 kW maximum (maximum system power configurable up to 14.5 kW)

Operating Temperature

50 – 95 °F (10 – 35 °C)

Pricing

$110,000 list

More Models, Deploy Fast

90% of models from Hugging Face just work, and coverage is growing every day across LLMs, image generation, speech, vision, embeddings, encoders, and more. Our hardware supports rapid model bring-up, enabling customers to deploy production AI systems.

Simple Scale

The underlying Tensix Neo™ architecture is designed to scale from one chip to thousands under one programming model. It’s the same mesh of cores communicating the same way, whether they’re on the same die or across a rack connected by Ethernet. Scale to fit your needs, big or small.
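To make the "one programming model" idea concrete, here is a toy Python model under loudly hypothetical assumptions: cores are addressed by global mesh coordinates, a core's chip is derived from those coordinates, and a single `send` call works for any destination. The names `Core`, `hops`, `send`, and the 2×2 cores-per-chip size are invented for illustration; this is not Tenstorrent's TT-Metalium API or the real Tensix Neo topology.

```python
from dataclasses import dataclass

# Toy model only (hypothetical sizes, not real Tensix Neo dimensions):
# each chip holds a 2x2 block of cores, and chips tile one global mesh.
CORES_PER_CHIP_X = 2
CORES_PER_CHIP_Y = 2


@dataclass(frozen=True)
class Core:
    """A core addressed by its global (x, y) position in the mesh."""
    x: int
    y: int

    def chip(self) -> tuple[int, int]:
        # Which chip this core lives on, derived purely from its coordinates.
        return (self.x // CORES_PER_CHIP_X, self.y // CORES_PER_CHIP_Y)


def hops(src: Core, dst: Core) -> int:
    # Hop count for dimension-ordered (X then Y) routing on a 2D mesh.
    return abs(src.x - dst.x) + abs(src.y - dst.y)


def send(src: Core, dst: Core, payload: bytes) -> dict:
    """One call for every destination; the caller never picks the transport."""
    crosses_chip = src.chip() != dst.chip()
    return {
        "hops": hops(src, dst),
        "leaves_die": crosses_chip,  # in this toy, such a route would use Ethernet
        "payload": payload,
    }


# Same API whether the destination is on-die or on another chip.
print(send(Core(0, 0), Core(1, 1), b"tile"))
print(send(Core(0, 0), Core(3, 2), b"tile"))
```

The point is the shape of the interface: locality shows up only as a property of the route (`leaves_die`), not as a different call the programmer has to make.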

Yours, End to End

Tenstorrent's full software stack, compiler to kernel, is open source. Compile a model and run it out of the box, or go deeper and tune the kernels directly. No black boxes at any layer.